For all the links I didn't read

There is a screenshot on my computer I return to when I need to remember why I built this.

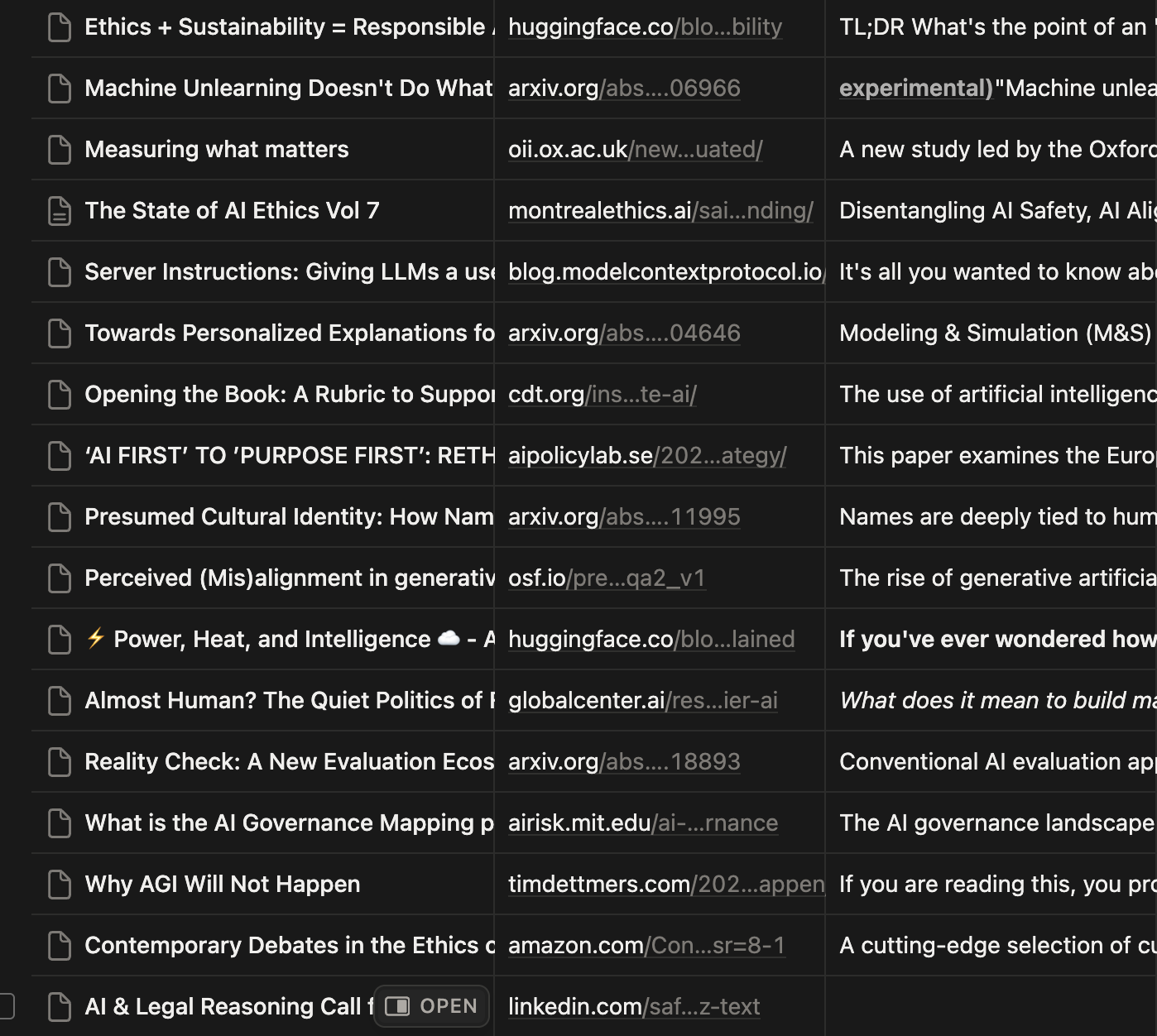

It is a Notion database with more than 340 entries. I've never opened most of them.

I truly meant to read each one: arXiv preprints, policy documents, AI governance papers from three continents. "Machine Unlearning Doesn't Do What You Think." I find useful, interesting links like these strewn across all kinds of threads.

I did not return to most of them. Mainly because I couldn't find them again.

A familiar pattern?

If you're anything like me, you're on LinkedIn sometimes, and someone announces an interesting paper. It's the most exciting thing in your field! But the link itself is tucked away in the comments.

Weeks later, in a real conversation about AI governance, I would try to get back to the thing I saw. You know what happened next.

Nothing.

What I tried

I tried everything. I put stuff in Notion. I put stuff in Pocket.

Eventually, I just gave up. That did not work either. I could not predict, when I saved something, what would matter later. A paper on AI procurement for healthcare seemed important in January, but I did not really need it until March.

So here we are.

With Link In Comments, every link is read and summarized automatically.

I can forward them to an email address, save them with a bookmarklet, or paste them into the dashboard.

What the table taught me

The Notion table still exists. I have not deleted it. It reminds me of why I built this.

To solve my own problem.

Your saved links might not be AI safety papers. They might be recipes, or architecture, or long reads you bookmarked. I hope this helps you too.

Adrianna

Founder, Link In Comments